From Machine Learning to Agentic AI: Are We Repeating the Same Mistake?

By Nikhil Singh

A team spends six months building an AI agent to handle customer refunds. The system plans, reasons, calls APIs, and adapts dynamically. It’s impressive. But once it is deployed, a simple alternative, a 10-line SQL query plus a three-step decision tree, turns out to be twice as fast, ten times cheaper, and far easier to maintain.

The agent works. It just wasn’t needed.

We’ve seen this pattern before. First, it was machine learning. Then deep learning. Then generative AI. And now, increasingly, agentic AI. Each wave brings real breakthroughs, but also a familiar tendency: mapping every problem to the newest paradigm.

We are currently entering the overextension phase of the agentic cycle.

The industry is treating agents as a universal solution. But autonomy is not a feature you add; it is a trade-off you accept. You trade predictability for flexibility. You trade speed for capability. If your problem doesn’t require that flexibility, you are simply buying cost and complexity for no reason.

The question is not whether agentic AI is valuable. It is. The question is whether we are using it with enough discipline.

We are deploying autonomy faster than we are defining its boundaries.

The Pattern We Keep Repeating

A few years ago, teams attempted to solve fundamentally simple problems with machine learning models.

We built classifiers where deterministic rules would suffice. We trained models on datasets too small to justify statistical learning. We optimized pipelines where the cost of maintaining the model exceeded its benefit.

In hindsight, the issue wasn’t capability; it was fit between the tool and the problem.

Just as we once used neural networks where a linear regression would do, we are now using LLM agents for static API orchestration.

And sometimes, the motivation isn’t even technical. I call it resume-driven development—the tendency to adopt the latest paradigm not because the problem requires it but because it signals relevance. Agentic AI becomes a line item before it becomes a necessity.

What Makes Agentic AI Different and Powerful

Agentic systems introduce something fundamentally new: autonomy in decision-making and execution.

Instead of only generating outputs, they:

- Plan multi-step workflows

- Interact with tools and environments

- Adapt dynamically to feedback

- Operate under partial information

This makes them uniquely powerful for:

- Open-ended tasks

- Multi-step reasoning problems

- Dynamic and uncertain environments

Used correctly, agents reduce cognitive overhead and unlock new classes of applications. But that same flexibility introduces risk.

When Autonomy Becomes Over-Engineering

Not every problem benefits from autonomy.

Many business problems are:

- Deterministic

- Stable

- Low in ambiguity

- Sensitive to errors

In such cases, introducing an agent adds:

- Latency

- Compute cost

- Non-deterministic behavior

- Debugging complexity

A structured workflow, rules engine, or simple analytical system will often outperform an agent, not in sophistication, but in reliability and efficiency.
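To make the contrast concrete, here is a minimal sketch of what a deterministic refund rule set might look like. The field names, thresholds, and outcome labels are all hypothetical and chosen only for illustration; they are not taken from any real refund policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RefundRequest:
    amount: float              # order value in the account currency
    days_since_purchase: int
    item_returned: bool


# Hypothetical policy thresholds, assumed for this example only.
RETURN_WINDOW_DAYS = 30
AUTO_APPROVE_LIMIT = 100.0


def decide_refund(req: RefundRequest) -> str:
    """Three-step decision tree: the same input always gives the same answer."""
    # Step 1: is the request inside the return window?
    if req.days_since_purchase > RETURN_WINDOW_DAYS:
        return "reject"
    # Step 2: has the item come back?
    if not req.item_returned:
        return "await_return"
    # Step 3: is the amount small enough to approve automatically?
    if req.amount <= AUTO_APPROVE_LIMIT:
        return "approve"
    return "manual_review"
```

Every branch here can be unit-tested exhaustively, and a change in policy is a one-line diff. That is the kind of reliability an agent would have to beat to justify its cost.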

The issue is not that agents fail. It’s that they succeed in solving problems that didn’t require them.

The Hidden Cost of “Intelligent” Systems

The cost of overengineering AI systems is rarely visible upfront. Agentic systems introduce multiple layers of complexity:

- Prompt design and control flow

- Tool integration and fallback handling

- Memory and context management

- Evaluation and monitoring challenges

Unlike traditional systems, where behavior is predictable, agents operate probabilistically, which expands the “test surface” dramatically. One emerging challenge is that an agent’s behavior can change subtly over time as prompts evolve, tools are updated, or context windows shift. In traditional machine learning systems, we deal with data drift, which is measurable and can be monitored through metrics such as accuracy or distribution shift. Agentic systems, in contrast, introduce what might be called reasoning drift: a behavioral shift in how decisions are made.

This makes them significantly harder to bound, test, and verify in production environments, especially as systems grow more complex.
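One possible way to get a first handle on this kind of behavioral shift is to replay a fixed set of test cases through two versions of a system and count how often the decision changes. The sketch below assumes each version can be wrapped as a function returning a decision label. The function `decision_change_rate` and the two stand-in "agents" are hypothetical, and a real agent is non-deterministic, so in practice each case would likely need several runs.

```python
from typing import Callable, Sequence, TypeVar

Case = TypeVar("Case")


def decision_change_rate(
    cases: Sequence[Case],
    baseline: Callable[[Case], str],
    candidate: Callable[[Case], str],
) -> float:
    """Fraction of fixed test cases on which two versions decide differently."""
    if not cases:
        raise ValueError("need at least one test case")
    changed = sum(1 for case in cases if baseline(case) != candidate(case))
    return changed / len(cases)


# Stand-ins for two versions of an agent (for example, before and after a
# prompt edit). Real agents would call a model; these are plain functions.
def agent_v1(amount: float) -> str:
    return "approve" if amount <= 100 else "escalate"


def agent_v2(amount: float) -> str:
    return "approve" if amount <= 50 else "escalate"


golden_cases = [10.0, 40.0, 75.0, 500.0]
rate = decision_change_rate(golden_cases, agent_v1, agent_v2)
print(f"decisions changed on {rate:.0%} of cases")
```

A check like this only detects that outcomes moved, not why the reasoning changed, so it is a starting point for monitoring rather than a full answer.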

There is also an organizational cost. Teams invest in autonomy infrastructure before validating whether autonomy is needed. This delays time-to-value and creates long-term maintenance burdens, especially in early-stage environments where simplicity compounds faster than complexity.

Autonomy Versus Orchestration

The most important distinction in modern AI systems is not between models; it is between autonomy and orchestration.

- Autonomy: The system decides what to do and how to do it

- Orchestration: The system follows a structured, predefined sequence

Many real-world applications benefit more from orchestration than autonomy. Consider:

- Customer onboarding flows

- Marketing campaign pipelines

- Internal reporting systems

These systems require coordination, not exploration. Introducing full autonomy into structured environments often reduces clarity without adding capability.
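The difference can be sketched in a few lines. In the orchestrated version the developer fixes the order of steps in advance; in the autonomous version a policy picks the next step at runtime. Everything here is an illustrative assumption: the step names, the `State` dictionary, and `choose_next`, which stands in for what would be a model call in a real agent.

```python
from typing import Callable, Dict, List, Optional

State = Dict[str, object]
Step = Callable[[State], State]


def run_orchestrated(steps: List[Step], state: State) -> State:
    """Orchestration: the sequence of steps is fixed by the developer."""
    for step in steps:
        state = step(state)
    return state


def run_autonomous(
    tools: Dict[str, Step],
    choose_next: Callable[[State], Optional[str]],
    state: State,
    max_steps: int = 10,
) -> State:
    """Autonomy: a policy decides which tool to run next, or when to stop."""
    for _ in range(max_steps):  # hard cap so the loop always terminates
        name = choose_next(state)
        if name is None:
            break
        state = tools[name](state)
    return state


# Hypothetical onboarding steps; each returns an updated copy of the state.
def create_account(state: State) -> State:
    return {**state, "account_created": True}


def send_welcome_email(state: State) -> State:
    return {**state, "welcome_sent": True}


# Stand-in for an LLM-driven planner: picks whichever step is still missing.
def choose_next(state: State) -> Optional[str]:
    if not state.get("account_created"):
        return "create_account"
    if not state.get("welcome_sent"):
        return "send_welcome_email"
    return None


fixed = run_orchestrated([create_account, send_welcome_email], {})
tools = {"create_account": create_account, "send_welcome_email": send_welcome_email}
chosen = run_autonomous(tools, choose_next, {})
```

For a flow like onboarding, both versions end in the same state. The autonomous loop adds a decision point at every step, along with the need for a step cap, without adding any capability.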

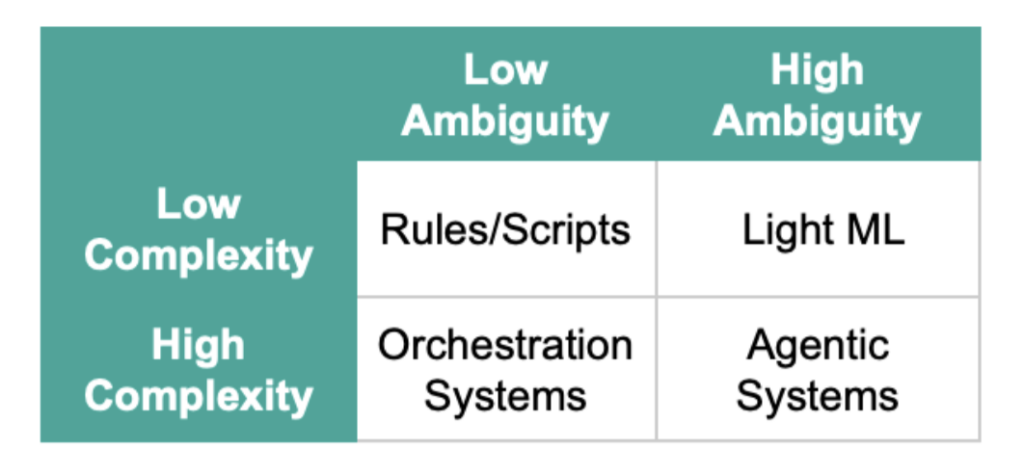

A Simple Mental Model: Complexity vs. Ambiguity

One way to decide whether to use an agent is to map the problem along two dimensions:

- Complexity: Number of steps, dependencies, integrations

- Ambiguity: Uncertainty in inputs, goals, or environment

Agents are most valuable in the high-complexity, high-ambiguity quadrant. Outside of that, simpler systems often win.
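As a rough illustration, the two dimensions can be turned into a small triage function. This sketch assumes each dimension has been scored between 0 and 1 by the team. The 0.5 threshold and the labels for the three non-agent quadrants are assumptions added for the example; the only claim taken from the model itself is that agents belong in the high-complexity, high-ambiguity quadrant.

```python
def recommend_approach(
    complexity: float, ambiguity: float, threshold: float = 0.5
) -> str:
    """Map (complexity, ambiguity) scores in [0, 1] to a starting approach."""
    for name, value in (("complexity", complexity), ("ambiguity", ambiguity)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")

    high_complexity = complexity >= threshold
    high_ambiguity = ambiguity >= threshold

    if high_complexity and high_ambiguity:
        return "agent"
    if high_complexity:
        # Many steps but clear rules: coordination, not exploration.
        return "orchestrated workflow"
    if high_ambiguity:
        # Few steps but fuzzy inputs (label is an assumption of this sketch).
        return "single model call"
    return "rules or simple query"


print(recommend_approach(0.9, 0.8))
print(recommend_approach(0.2, 0.1))
```

The scores themselves are judgment calls, so the value of a function like this is mainly in forcing the question to be asked before any agent infrastructure is built.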

The Maturity Curve of AI Adoption

Most technology waves follow a similar trajectory:

Exploration → Overextension → Correction → Stabilization

Agentic AI is currently transitioning from exploration to overextension. The next phase will bring a correction, where teams distinguish between:

- Problems that require autonomy

- Problems that benefit from structure

The organizations and teams that navigate this well will not be those using the most advanced systems but those using the right systems.

The challenge for leaders today is not to adopt every new paradigm but to make deliberate choices that prioritize outcomes over novelty and value over architectural complexity.

Designing for Value, Not Novelty

The real promise of AI is not in building the most complex system possible. It is in building systems that deliver meaningful outcomes. Agentic AI will be a critical part of that future, but only when applied with intent.

Because in the end, the most intelligent system is not the one that can do everything; it is the one that does the right thing, simply and reliably.

About Nikhil Singh

Nikhil Singh works on applied AI and data systems at Amazon Ads, where he focuses on audience intelligence and scalable decision-making. Over the past decade, he has built data-driven solutions across organizations like Amazon and Dell. He is currently exploring how emerging AI paradigms, including agentic systems, fit into real-world workflows.